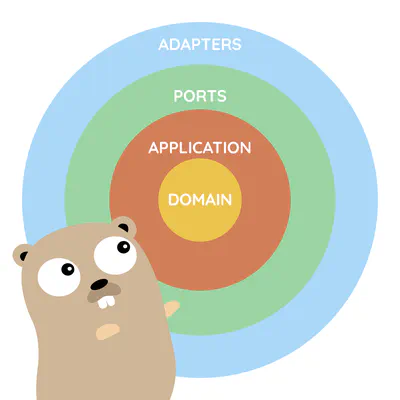

Learn to build professional, maintainable, and modern Go software

Learn well-proven patterns and techniques we have used across multiple teams and projects over the past 15 years.

Our initiatives

Blog

44 in-depth articles about Go and advanced backend patterns, published over the last 8 years. 270k+ yearly visitors

Watermill

Your standard library for building event-driven applications in Go the easy way. 9,748 GitHub stars

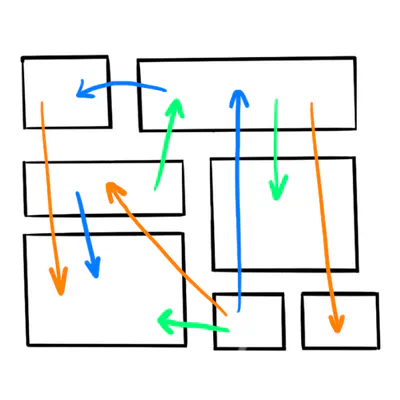

Wild Workouts

A complete Go DDD example application. 6,338 GitHub stars

Our Online Training

Learn Go from scratch to advanced patterns by creating real-life projects. 7,200+ trainees

Newsletter

Stay up to date with our initiatives. 18k+ subscribers

Go With The Domain

An e-book about building Modern Business Software in Go. 60k+ downloads


Let's stay in touch

Be the first to know about new posts on our blog. We also plan to organize events like live coding sessions, so you won't miss any of them!

Joining our newsletter helps us stay independent of social media algorithms. We'll deliver updates directly to you.